Enter your evaluation code to mint time-limited downloads.

mkdir -p ~/lokogate

cd ~/lokogate

Place the downloaded wheel (.whl) and its .sha256 file in this folder and verify integrity.

sha256sum -c *.sha256

python3 -m venv .venv

source .venv/bin/activate

pip install -U pip

pip install ./*.whl

lokogate --version

lokogate init --workspace "$(pwd)"
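The `sha256sum -c *.sha256` check in the steps above only helps if a failure actually stops the install. A minimal sketch of a fail-fast wrapper follows, assuming GNU `sha256sum` and checksum files in its standard format. `verify_checksums` is a hypothetical helper name for illustration, not part of lokogate.

```shell
#!/bin/sh
# Sketch only: verify_checksums is a hypothetical helper, not a lokogate command.
# It succeeds only if the directory has at least one .sha256 file and all of them verify.
verify_checksums() {
    dir=${1:-.}
    # Run in a subshell so the caller's working directory is unchanged.
    (
        cd "$dir" || exit 1
        found=0
        for sum_file in ./*.sha256; do
            # An unmatched glob stays literal, so skip names that do not exist.
            [ -f "$sum_file" ] || continue
            found=1
            # sha256sum -c exits non-zero on any mismatch or missing file.
            sha256sum -c "$sum_file" >/dev/null || exit 1
        done
        # Treat "no checksum files at all" as a failure, not a pass.
        [ "$found" -eq 1 ]
    )
}
```

One way to use it is to chain the install onto the check, for example `verify_checksums . && pip install ./*.whl`, so a tampered or incomplete download never reaches `pip`.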

lokogate run \

--workspace "$(pwd)" \

--gate "$(pwd)/policies/gate_benchmark_v1.yaml" \

--weights /ABS/PATH/TO/weights.pt \

--evidence-dir /ABS/PATH/TO/evidence_root \

--evidence-id customer_val_01 \

--device 0 \

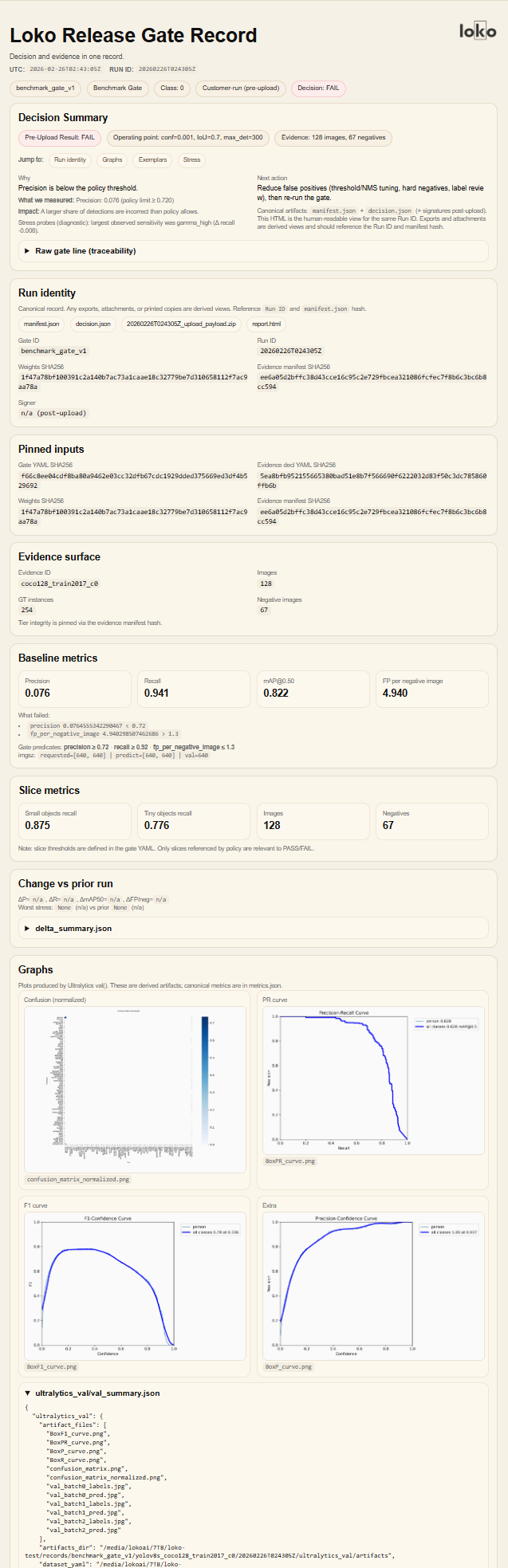

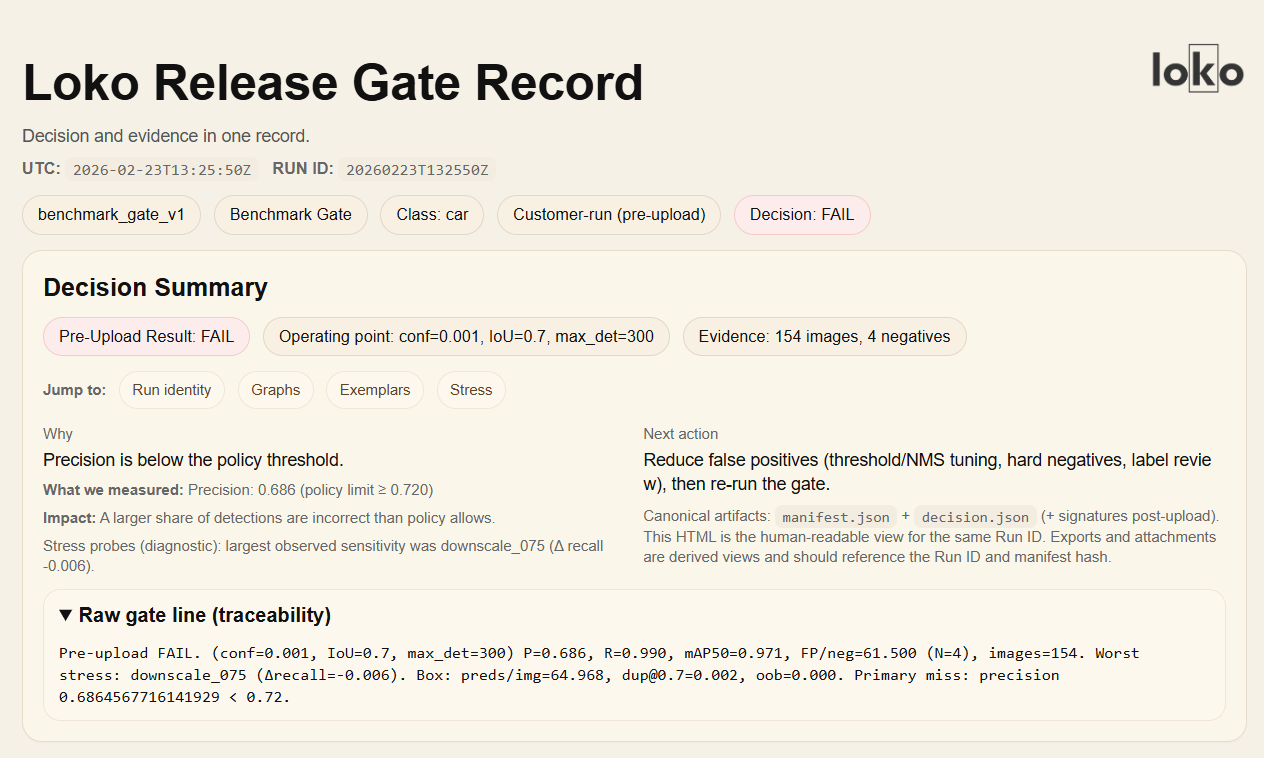

--model-id model_v1$(pwd)/records/<gate_id>/<model_id>/<RUN_ID>/bundle/<RUN_ID>_upload_payload.zipreport/report.html
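To confirm that a run produced its files, the record paths above can be assembled and checked from a script. This is a rough sketch under one assumption: that `bundle/` and `report/` sit directly under the per-run `records/<gate_id>/<model_id>/<RUN_ID>/` directory. `run_artifact_paths` and `check_run_artifacts` are hypothetical helper names, not lokogate commands.

```shell
#!/bin/sh
# Sketch only: these helpers are hypothetical, not part of lokogate.
# Assumption: bundle/ and report/ live directly under the per-run records directory.

# Print the expected artifact paths for one run, one per line.
# Arguments: workspace gate_id model_id run_id
run_artifact_paths() {
    workspace=$1
    gate_id=$2
    model_id=$3
    run_id=$4
    run_dir="$workspace/records/$gate_id/$model_id/$run_id"
    printf '%s\n' "$run_dir/bundle/${run_id}_upload_payload.zip"
    printf '%s\n' "$run_dir/report/report.html"
}

# Report missing artifacts on stderr; return non-zero if any are missing.
check_run_artifacts() {
    run_artifact_paths "$@" | {
        missing=0
        while IFS= read -r path; do
            if [ ! -f "$path" ]; then
                printf 'missing: %s\n' "$path" >&2
                missing=1
            fi
        done
        [ "$missing" -eq 0 ]
    }
}
```

For example, `check_run_artifacts "$(pwd)" <gate_id> model_v1 <RUN_ID>` would be called with the real gate and run identifiers substituted for the placeholders.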

bundle/<RUN_ID>_upload_payload.zip

YYYYMMDDThhmmssZ

<RUN_ID>_release.loko.zip

<RUN_ID>_release.loko.zip.sha256
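The file names above can be derived from a run identifier in a script. The sketch below assumes that `YYYYMMDDThhmmssZ` describes the shape of `<RUN_ID>`, which is an inference because the text does not say so explicitly. The trailing `Z` conventionally denotes UTC. `is_run_id` and `release_file_names` are hypothetical helper names, not lokogate commands.

```shell
#!/bin/sh
# Sketch only: these helpers are hypothetical, not part of lokogate.
# Assumption: a RUN_ID is shaped like YYYYMMDDThhmmssZ (8 digits, T, 6 digits, Z).

# Return 0 if the argument has the expected shape. This checks digits and
# separators only; it does not validate calendar ranges such as month 13.
is_run_id() {
    case $1 in
        [0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9]T[0-9][0-9][0-9][0-9][0-9][0-9]Z) return 0 ;;
        *) return 1 ;;
    esac
}

# Print the release archive name and its checksum file name, one per line.
release_file_names() {
    is_run_id "$1" || { printf 'not a RUN_ID: %s\n' "$1" >&2; return 1; }
    printf '%s\n' "${1}_release.loko.zip" "${1}_release.loko.zip.sha256"
}
```

If the `.sha256` file is in standard `sha256sum` format, which is also an assumption, the release archive could then be checked with `sha256sum -c` on the second name printed.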